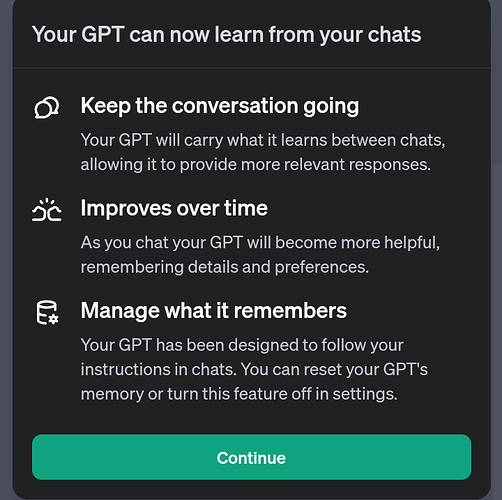

I just saw a popup explaining a new memory feature. I found this article about it while looking for more information:

I love this, and I believe it was already remembering things between sessions before I saw this prompt, but every time I ask, it still claims not to remember. I don’t see any documentation anywhere about memory between sessions. To optimize prompts, it would be good to know how best to engage it so we can help it learn the things that matter to me as an end user. I didn’t see much in the forum about this topic. I’d appreciate any feedback or direction on memory retention between sessions: what memory is maintained, and what can we control? When I ask, GPT replies that it doesn’t remember things.


Agreed, I’d love to learn more too and understand this better. I’m excited for the update. I do seem to be able to check the temporary chat option now when selecting the model from the drop-down list, and the settings appear to state that it can now learn and remember from chats. However, when I create a new chat and try to carry information from it into another new chat, I’m unable to replicate this. Has the update not rolled out yet, or was only the settings window updated?

I’m not certain I can verify this is working on my end.


I got the same popup.

Saw the feature in the settings.

Went to test it, with no results.

Checked the settings again, and the feature has disappeared.

I think they are either currently updating it or it was an internal leak.


I saw it too. I turned on the setting. I tried the “Learn more” link but it was broken. Then access to the setting disappeared a few minutes later.


Maybe it is just a disclaimer: since they are training their models on our messages, they need to state that for transparency? Not sure.

I have made a few posts here about memory retention. I have caught the model referencing information from a previous session on the very first post. I have also seen it reference code from previous sessions. The model claims not to retain anything when it does, and now there is a vague announcement about memory with zero supporting detail. The whole handling of GPT memory management is either lazy or sketchy. Keep asking questions! If enough people ask, maybe they will comment on it.
