OpenAI is scheduled to deprecate DALL-E-3 on 05/12/2026:

On November 14th, 2025, we notified developers using DALL·E model snapshots of their deprecation and removal from the API on May 12, 2026.

| Shutdown date | Model / system | Recommended replacement |
| --- | --- | --- |
| 2026-05-12 | dall-e-2 | gpt-image-1 or gpt-image-1-mini |
| 2026-05-12 | dall-e-3 | gpt-image-1 or gpt-image-1-mini |

Here is the problem: While gpt-image-1 is a great model, it cannot do what DALL-E-3 does. Example:

A simple prompt:

It is daytime. The sun is very bright, the sky is blue, and white “pillow” clouds are floating high in the sky. A beautiful, colorful and majestic dragon is perched on a large boulder looking down into a lush green valley surrounded by a tall pine forest.

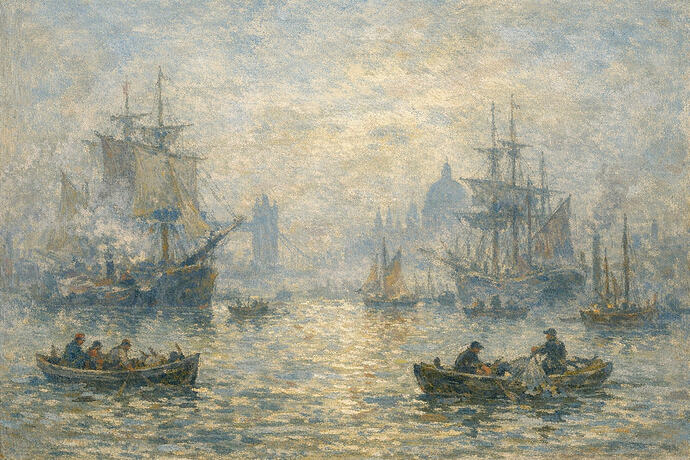

DALL-E-3

GPT-Image-1 (same prompt)

Which one do you like better?
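If you want to run the same side-by-side test yourself, here is a minimal sketch. It assumes the official `openai` Python package is installed and `OPENAI_API_KEY` is set in your environment. The helper names (`build_request`, `generate`, `compare`), the 1024x1024 size, and the output file names are my own choices for illustration, not anything OpenAI prescribes.

```python
import base64


def build_request(model: str, prompt: str) -> dict:
    """Build keyword arguments for client.images.generate() for one model."""
    request = {"model": model, "prompt": prompt, "size": "1024x1024", "n": 1}
    if model.startswith("dall-e"):
        # DALL-E models return a URL by default; ask for base64 instead so
        # both models can be saved the same way. gpt-image-1 always returns
        # base64 and does not accept this parameter.
        request["response_format"] = "b64_json"
    return request


def generate(model: str, prompt: str, out_path: str) -> None:
    """Generate one image with the given model and write it to out_path."""
    # Imported here so the rest of the file works without the SDK installed.
    from openai import OpenAI

    client = OpenAI()  # reads OPENAI_API_KEY from the environment
    result = client.images.generate(**build_request(model, prompt))
    with open(out_path, "wb") as f:
        f.write(base64.b64decode(result.data[0].b64_json))


def compare(prompt: str) -> None:
    """Send the same prompt to both models and save one PNG per model."""
    for model in ("dall-e-3", "gpt-image-1"):
        generate(model, prompt, f"{model}.png")
```

Calling `compare("your prompt here")` writes `dall-e-3.png` and `gpt-image-1.png` to the current directory so you can judge the two results for yourself. Keep in mind that each call is billed, and the `dall-e-3` half will stop working once the model is removed.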

Don’t get me wrong: GPT-Image-1 can create some truly amazing images and image edits. I’ve done it myself, and others have done it better than me. But prompting GPT-Image-1 well takes a “skill set” that is difficult to pick up, at best. Maybe this is what OpenAI wants: a token eater.

Maybe OpenAI can modify the GPT-Image-1 API to have a dall-e-3 mode.

All I can say is that I’m done burning tokens on GPT-Image-1 just to get an acceptable image.