GPT-4 is producing short and incomplete responses. This has been consistent and ongoing for over a week now. This is a paid service and should provide much more value than 20 requests that output no more than 3 to 4 paragraphs. After running some tests, I see only 200 tokens being produced per reply.

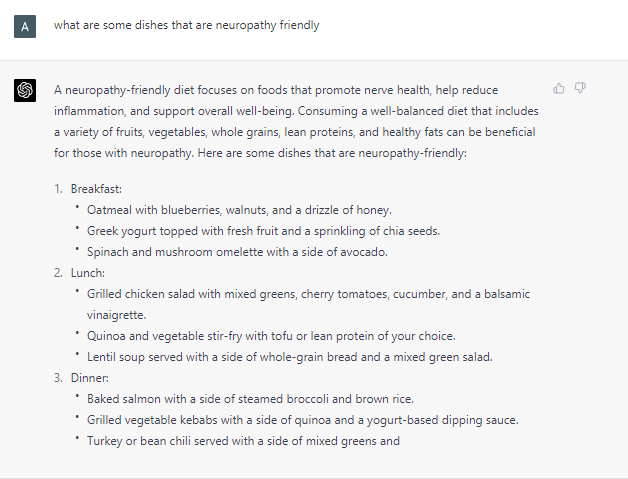

Another example:

Hi @alexbelotsky, welcome to the forum!

To increase the length of the response, you can try adding something like “reply with X paragraphs of text”, “reply with X words”, or “give me a detailed response” to your prompt. The model tries to answer as concisely as possible because that is what most people want. So if you tell the model you want a longer answer, you’ll receive one in most cases.

Secondly, GPT-4 is not what you buy when you buy ChatGPT Plus; GPT-4 is more like an add-on. In my experience, GPT-3 performs sufficiently well for most use cases.

Thank you for the reply. The issue is that all the output is being cut off mid word. This was not the case a week ago. See the examples below of not only short length compared to a week ago, but constant incomplete responses.

Compare that to GPT-4 responses from a week ago. Current replies are much shorter and almost always incomplete.

Ah, I have an idea why this might be the case.

Are you using the same chat all the time? I ask because with GPT-3 the whole content of the conversation was resubmitted each time you posted a new message. So the deterioration in performance might also be related to an increase in the computing power needed: the more content is submitted, the harder it becomes to answer the messages.

So you could try starting a new conversation and see if the issue persists.
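To illustrate the resubmission point, here is a rough sketch of how the amount of text sent grows if the full history goes along with every new message. The function name is made up for this example, and word count is only a crude stand-in for tokens (the real tokenizer counts differently), so treat the numbers as an illustration and not a measurement.

```python
def submitted_words_per_turn(messages):
    """Return the cumulative word count after each message.

    Assumption: the whole conversation so far is resubmitted with each
    new message, and words are a rough proxy for tokens.
    """
    totals = []
    running = 0
    for text in messages:
        running += len(text.split())  # whitespace-separated words
        totals.append(running)
    return totals


# Hypothetical three-message conversation.
history = [
    "Write a short poem",
    "Roses are red violets are blue",
    "Now make it longer",
]
print(submitted_words_per_turn(history))
```

Starting a new chat resets this running total, which is why it is a cheap first thing to try.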

Here is an example of the issue persisting with a brand new prompt. Note the cut off at the very end. GPT-3 does not seem to exhibit the same issue. I have tried with multiple browsers, multiple machines, and over the course of the last 4 or 5 days, all with the same partial results.

Thank you for sharing and testing, @alexbelotsky. Quite puzzling. If I find an answer somewhere, I’ll let you know!

If this were with the API, we could have tried some further steps, but the GUI is quite limited in what you can do to identify such issues…
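For anyone who does have API access, one such step might look like the sketch below. A Chat Completions response reports a `finish_reason` for each choice, and the value `length` means generation stopped because it hit the token limit. The function name and the sample dictionary are made up for illustration, and no request is actually sent here.

```python
def was_cut_by_token_limit(response):
    """Return True if the first choice stopped because of the token limit.

    `response` is assumed to be a plain dict shaped like the JSON body of
    a Chat Completions response.
    """
    choices = response.get("choices", [])
    if not choices:
        return False
    return choices[0].get("finish_reason") == "length"


# Hypothetical response body, hand-written for this example.
sample = {
    "choices": [
        {
            "message": {"role": "assistant", "content": "The answer is cut off mid-wo"},
            "finish_reason": "length",
        }
    ]
}
print(was_cut_by_token_limit(sample))
```

If this returned `False` for a reply that still ends mid-word, that would point away from a token limit and toward something else, such as a dropped connection.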

I have been facing this issue for the past 2 days. I’ve tried new chats, but the problem persists. Sometimes I will come back 15 minutes later and the answer will be finished; other times it’s perma-frozen. Now I just reply with “continue”; hopefully it doesn’t take 2 of my 25 allotted questions.

I have figured out a way to get complete responses if other methods fail. Type this after you get an incomplete response:

What comes after “copy the last line of output and put it in quotes here”? Your response was incomplete.

It’s that simple, that’s all you need to do. Now, you may get cut off again if it’s a very long response, but just keep repeating the same process and you will eventually get what you need.
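If you end up doing this a lot, the follow-up message can be built from the truncated reply automatically. This is just a small sketch of the manual process above; the function name is invented for the example, and it only builds the text, it does not send anything.

```python
def build_continue_prompt(partial_response):
    """Build the follow-up question from the last non-empty line of a reply."""
    lines = [line.strip() for line in partial_response.splitlines() if line.strip()]
    if not lines:
        raise ValueError("partial_response has no text to quote")
    last_line = lines[-1]
    return f'What comes after "{last_line}"? Your response was incomplete.'


# Hypothetical truncated reply.
reply = "Here is the first paragraph.\nThe model tries to answ\n"
print(build_continue_prompt(reply))
```

Quoting only the last line keeps the follow-up short, which matters if the length of the conversation is part of the problem.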

If

200+/- tokens seems to be the limit

So I would like to know it.

For a better User Experience, display a message with this limitation to the user.

Me: Which of Jakob Nielsen’s heuristic criteria does this case meet? “ChatGPT provides an answer but it is partial: the writing of the answer is interrupted. At the system level, this is due to the limitation of the number of tokens. To help the user understand this limitation, a “Warning” (or “Informative”?) message is displayed.”

Chat GPT-4: In this case, several of Jakob Nielsen’s heuristics are involved:

- Visibility of system status: Displaying a warning or informative message to explain the token limitation and the partial response allows the user to understand the situation and be informed about the system’s status.

- Consistency and standards: The message should be presented consistently with other messages and user interface elements, adhering to established conventions and standards for warnings or informational messages.

- Help users recognize, diagnose, and recover from errors: Although the partial response is not an error per se, displaying an explanatory message helps the user understand the situation and take steps to address the issue, such as rephrasing the question to get a complete answer within the available token limit.

- Help and documentation: The warning or informative message also acts as help for the user, explaining the system limitation and possibly providing suggestions for addressing the issue or obtaining additional help.

Me: What might this message be to a ChatGPT user who is not necessarily a computer expert?

Chat GPT-4: To a ChatGPT user who may not be a computer expert, the message could be crafted in simple, non-technical language to ensure clarity and ease of understanding. Here’s an example of such a message: “Oops! It looks like the response was cut off due to character limits. Please try rephrasing your question or asking a shorter one to get a more complete answer. We apologize for the inconvenience.”

This message is concise, easy to understand, and offers a solution to the user without using technical jargon like “tokens.”

I’ve been experiencing an issue with ChatGPT4 for the past two days where it gives incomplete responses, and it’s been quite frustrating. To make matters worse, when I ask it to continue or complete the response, it counts towards the 25 message limit. Can you please look into this issue and fix it as soon as possible? Thank you for your assistance.

I’ve been experiencing the exact same issue (I got complete responses at first, and since the 17th of March I only get short responses). I use new chats and delete old ones; nothing helps. I don’t think it is a problem on the user side. I interpret it as a limitation on the server side, as the number of Plus users with GPT-4 access has increased (e.g. through the rollout to India on exactly the day I first experienced the cut-off messages).

Please help to solve this issue.

Thank you!

Yup facing the same issue… I think they have done something

Same issue, the exact same issue GPT-3 was having in the first few weeks after release. It’s also getting worse each day. The OP’s examples are far longer than anything I’ve got today. I’ve seen posts from a few people saying they have 2 accounts, and one has this issue while the other does not. I might be making this up, but I thought these models produced the whole output at once, and that the word-by-word effect on the screen is just a visual to slow down the user, but I am not sure about this. If that’s the case, I think this is a network issue rather than a change to the model.

Same issue. Answers get cut mid word. Up

This issue is highly correlated with the increasing slowness of GPT-4. The slower it gets the earlier it cuts off. For the past two days it’s gotten to the point that it won’t even generate a response due to server errors. All of these problems have the same root cause.

Having the same problem: short and incomplete answers.