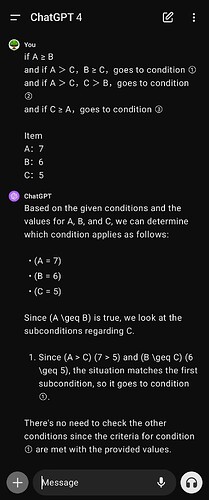

Here is my logic:

if A ≥ B

and if A > C and B ≥ C, it goes to condition ①

and if A > C and C > B, it goes to condition ②

and if C ≥ A, it goes to condition ③

Item

A:7

B:6

C:5

GPT4 tells me that A(7) ≥ B(6) and C(5) > B(6), so it goes to condition ②.

But the correct answer is evidently ①, which confuses me so much.
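For reference, here is a minimal Python sketch of the same rules, just to pin down what I expect. The function name `classify` is my own, and returning `None` when A < B is an assumption, since the rules do not say what happens in that case.

```python
from typing import Optional


def classify(a: float, b: float, c: float) -> Optional[str]:
    """Return "①", "②" or "③" according to the rules above."""
    if a < b:
        # Assumption: the rules only cover A ≥ B, so this case is left undefined.
        return None
    if c >= a:
        return "③"
    # From here on A > C holds.
    if b >= c:
        return "①"
    # Remaining case: A > C and C > B.
    return "②"


# The example item: A = 7, B = 6, C = 5
print(classify(7, 6, 5))  # prints ①
```

With A = 7, B = 6, C = 5, the check C > B is false (5 is not greater than 6), so ② cannot apply and ① is the only rule that matches.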

I NEED HEEEEEELPPPPPPPPP

The detailed structure is as follows (you can try it multiple times with a JSON output format!)

______________(Prompt)

Your job is to give the final reply to the franchisee according to the information in [DATA] and follow rules of [RULES] to tell the franchisee which product goes to which condition ① or ② or ③.

[RULES]

if A ≥ B

and if A > C,B ≥ C,goes to condition ①

and if A > C,C > B,goes to condition ②

and if C ≥ A,goes to condition ③

[DATA]

item id:Fireworks 100g(5290)

A:8

B:6

C:10

item id:Soil 200g(5841)

A:10

B:5

C:10

item id:Bread 300g(2841)

A:6

B:5

C:5

Return the structure of json:

{

"data": [{

"explain":"string",

"itemnum":"string",

"condition": "string",

}, {

"explain":"string",

"itemnum": "string",

"condition": "string",

}]

}

- json definition:

- explain: lay out your reasoning process in detail

- itemnum:item id

- condition: ① or ② or ③

GPT4 : OUTPUT

The incorrect reply:

{

"data": [

{

"condition": "③",

"explain": "For Fireworks 100g, A is 8, B is 6, and C is 10. Since C is greater than A, it goes to condition ③.",

"itemnum": "5290"

},

{

"condition": "①",

"explain": "For Soil 200g, A is 10, B is 5, and C is also 10. Since A is equal to C and A is greater than B, it goes to condition ①.",

"itemnum": "5841"

},

{

"condition": "①",

"explain": "For Bread 300g, A is 6, B is 5, and C is 5. Since A is greater than C and B, and B is equal to or greater than C, it goes to condition ①.",

"itemnum": "2841"

}

]

}

The correct reply:

{

"data": [

{

"condition": "③",

"explain": "For Fireworks 100g, A (8) is not greater than or equal to C (10), so according to the rules, it goes to condition ③.",

"itemnum": "5290"

},

{

"condition": "③",

"explain": "For Soil 200g, A (10) is equal to C (10), and since A is greater than or equal to B (5), it also goes to condition ③.",

"itemnum": "5841"

},

{

"condition": "①",

"explain": "For Bread 300g, A (6) is greater than B (5) and A is greater than C (5), with B also being greater than or equal to C. Therefore, it fits the criteria for condition ①.",

"itemnum": "2841"

}

]

}

I ran it 10 times, and about 80% of the time it told me the second product should go to condition ①, which I think should be condition ③, and I'm so confused right now.
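To double-check which reply is right, the rules from [RULES] can be evaluated directly on the three items in [DATA]. This is only a sketch: the names `classify`, `ITEMS` and `build_reply` are made up for illustration, the `explain` field is left out, and treating A < B as "no condition" is an assumption because the rules do not cover it.

```python
import json


def classify(a, b, c):
    """Apply the rules from [RULES]; returns None when A < B (assumed undefined)."""
    if a < b:
        return None
    if c >= a:
        return "③"
    # Here A > C, so only the B vs C comparison is left.
    return "①" if b >= c else "②"


# The three items from [DATA]
ITEMS = [
    {"itemnum": "5290", "A": 8, "B": 6, "C": 10},   # Fireworks 100g
    {"itemnum": "5841", "A": 10, "B": 5, "C": 10},  # Soil 200g
    {"itemnum": "2841", "A": 6, "B": 5, "C": 5},    # Bread 300g
]


def build_reply(items):
    """Build a dict shaped like the requested JSON, without the explain field."""
    return {
        "data": [
            {
                "itemnum": item["itemnum"],
                "condition": classify(item["A"], item["B"], item["C"]),
            }
            for item in items
        ]
    }


reply = build_reply(ITEMS)
print(json.dumps(reply, ensure_ascii=False, indent=2))
```

Under these rules the conditions come out as ③, ③, ①, which matches the reply I labelled as correct: for Soil 200g, C (10) ≥ A (10) holds, so A > C is false and ① cannot apply.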