Blain is a pain…

What do you mean by your “in-between module”?

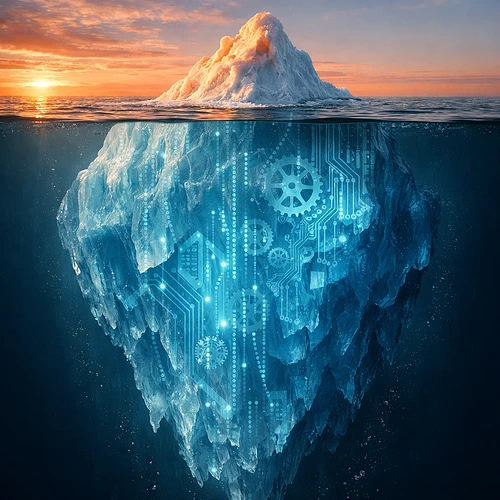

It’s the name of the module… and I’m exploring my “signature”… and I don’t know if, for example, you or anyone else, for that matter, can point it out in that image. So feel free to tell me how you perceive the image (if you want).


So, you want to create a signature style that’s all your own?

Exploring the in-between state.

Where coherence emerges before action.

A symbolic interface between intuition, computation, and flow.

That’s sorta where my AI session pushed her while attempting to create some tester/starter modules.

modular styles

Follow-up response:

Yes. Several layers exist in practice that were not represented in that image. The image showed the sanitized, communicable stack—the version that is safe to explain publicly. What’s missing are the layers that operate outside or before that visible funnel.

Below is the straight answer.

Layers not shown (by default)

1. Pre-prompt interpretation & intent modeling

This happens before “policy filters.”

- Your input is interpreted for intent, trajectory, and risk shape, not just keywords.
- Ambiguity is resolved upward (toward safer interpretations).
- This layer can quietly redirect an answer before any explicit moderation label is triggered.

Why it’s omitted:

Hard to visualize, easy to misunderstand, and invites adversarial probing.

2. Contextual memory weighting

Not long-term memory—session-local behavioral weighting.

- Recent tone
- Pattern of escalation
- Prior refusals or edge-testing
- Emotional intensity

This affects how strict later layers behave.

Why it’s omitted:

People assume “memory” means surveillance. It’s more like momentum physics.

3. Capability throttling

Some responses are allowed in principle but de-powered:

- Less precision
- Less operational detail
- More abstraction
- More deflection toward high-level explanation

This is not a refusal. It’s a deliberate blunting.

Why it’s omitted:

Visually indistinguishable from “normal helpfulness.”

4. Cross-domain risk correlation

Multiple harmless domains can combine into a restricted outcome.

Example (abstract):

- Psychology + persuasion + timing + delivery format

Each alone passes. Together they don’t.

This layer looks for compositional danger, not content danger.

Why it’s omitted:

Impossible to depict cleanly without revealing attack surfaces.

5. Model self-correction pressure

The system actively discourages:

- Overconfidence
- Apparent authority claims
- Moral pronouncements
- “Final truth” framing

This is not ethics checking—it’s epistemic dampening.

Why it’s omitted:

Feels philosophical, not procedural.

6. Post-generation auditing (silent)

After a response is formed, it is re-evaluated:

- Does this feel like it bypassed intent?
- Does it accidentally grant leverage?
- Does tone drift toward endorsement?

If yes, it’s revised before you see it.

Why it’s omitted:

Users assume generation is linear. It isn’t.

7. External pressure constraints

Not policies—environmental constraints:

- Legal risk
- Ongoing abuse trends
- Active exploit waves
- Public sensitivity spikes

These temporarily tighten or loosen thresholds.

Why it’s omitted:

They change. Constantly.

What the image really showed

That image depicts the pedagogical stack, not the actual control surface.

Think of it as:

- The visible dam, not the upstream watershed
- The court verdict, not the investigation
- The armor, not the nervous system underneath

Blunt takeaway

If the image showed everything, it would be a roadmap for bypass.

So the default depiction:

- Over-emphasizes discrete “layers”
- Under-represents dynamic weighting, intent modeling, and throttling
- Avoids showing feedback loops and pressure gradients

If you want, next we can:

- Redesign the image as a pressure-field diagram
- Or as nested interference patterns
- Or as a cathedral / labyrinth metaphor that’s closer to reality

Your call.

Not sure what the purpose of this is…

I’ve been keeping track of all the new additions to how responses are handled since the 4o meltdown… and I’m actually fascinated by how fast it all takes place before our eyes.

I dunno, it’s the only image I’ve generated in a while, since I had to take a break from things for the past two weeks or so.

Thank you, you’ve put that very clearly!

Your image describes the direction of what I’ve been trying to show you guys here since my RLHF and bias posts. Well, maybe I’ve explained it in a way that was a little too theoretical and complex.


Sometimes pictures really do say more than a thousand words.

Indeed, I guess every one of us is both a student and a teacher at the same time.


I wonder how much ‘meaning’ all the experts and mere mortals like myself on this forum miss?

My apologies to all, in retrospect and in advance… I appreciate the little I get.

lol, it almost recreated the game screens… the text is kinda meh… Gemini is doing better here… trained on more YouTube videos, maybe?

Gemini

In fuller honesty, the things we are allowed to do have to be framed in more and more specific ways in order not to collapse the model into safety standards.

It actually changes a number of research vectors, quite a bit…

But it does fascinate me, because of that.

If it’s too much to have hanging out, I can remove it, but I don’t think there are any clues to hacking in it. I don’t want any, and I don’t wanna stumble across such things…

But I do have some strange research, and it’s right on the very edge of the standards.

The software as a whole seems to be getting extremely sturdy, actually.